Project Overview

Reactive Autonomy in Action

Boe-Bot King is an autonomous line-following robot built to explore reactive sensor-based control and real-time decision making. Using dual QTI infrared sensors and simple collision whiskers, the robot continuously interprets its environment and selects motion behaviors without relying on complex state machines or path planning.

The project emphasizes reliability, predictability, and fast response—core principles behind many real-world autonomous systems. Simple doesn't mean simplistic; it means intentionally designed for robustness and clarity.

Mission Objective

The Challenge

Design and implement a fully autonomous line-following robot capable of navigating a constrained obstacle course while maintaining accurate path tracking and recovering from collisions or loss of line detection.

Success was measured not just by completion, but by consistency—could the robot reliably execute the same behavior under the same conditions? In autonomous systems, predictability is often more valuable than complexity.

Hardware Arsenal

The Robot's Toolkit

Boe-Bot Chassis

Mobile platform with differential drive system

Dual QTI Sensors

Infrared reflectance sensors for line detection

Whisker Sensors

Contact-based collision detection system

Feedback Systems

Piezoelectric speaker and status LEDs

BASIC Stamp

Onboard microcontroller for autonomous operation

LCD Display

Real-time debugging and status feedback

Behavior Decision Mapping

Sensor-to-Action Strategy

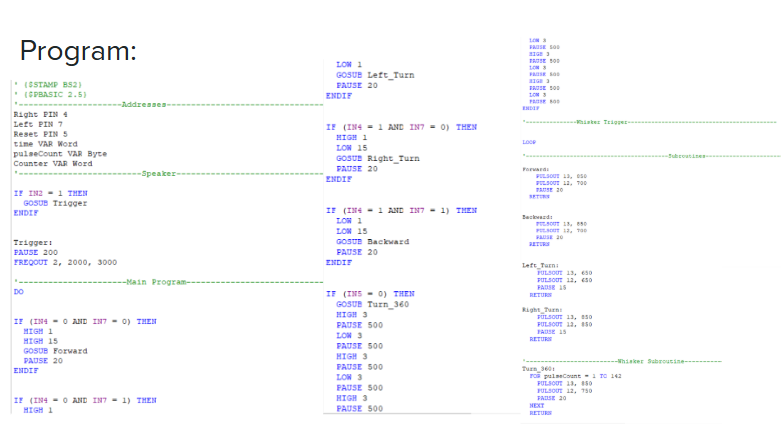

Instead of using a complex state machine, Boe-Bot King relies on a direct sensor-to-action mapping strategy. Each motion decision is selected based on the real-time state of the QTI and whisker sensors, resulting in fast, predictable behavior and easier debugging during hardware testing.

| Left QTI | Right QTI | Whiskers | Robot Action |
|---|---|---|---|
| No Line | No Line | Clear | Move Forward |
| Line | No Line | Clear | Turn Left |
| No Line | Line | Clear | Turn Right |
| Line | Line | Clear | Reverse |
| Any | Any | Triggered | 360° Recovery Turn |

Because whisker contact overrides both QTI readings, the mapping reduces to five cases. Each behavior is deterministic and can be traced back to a single row of the table.
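The table can be expressed as a small lookup, which is a convenient way to check the rules off the robot. The sketch below is a Python simulation, not the BASIC Stamp firmware; the names `Action`, `LINE_RULES`, and `select_action` are hypothetical and chosen only for illustration.

```python
from enum import Enum


class Action(Enum):
    FORWARD = "Move Forward"
    TURN_LEFT = "Turn Left"
    TURN_RIGHT = "Turn Right"
    REVERSE = "Reverse"
    RECOVERY_TURN = "360° Recovery Turn"


# (left_on_line, right_on_line) -> action, valid only while the whiskers are clear.
# Each entry corresponds to one row of the decision table.
LINE_RULES = {
    (False, False): Action.FORWARD,
    (True, False): Action.TURN_LEFT,
    (False, True): Action.TURN_RIGHT,
    (True, True): Action.REVERSE,
}


def select_action(left_on_line: bool, right_on_line: bool, whisker_hit: bool) -> Action:
    """Return the action for one sensor snapshot (hypothetical helper)."""
    # Whisker contact overrides the QTI readings ("Any | Any | Triggered").
    if whisker_hit:
        return Action.RECOVERY_TURN
    return LINE_RULES[(left_on_line, right_on_line)]
```

Because the function has no internal state, the same sensor snapshot always yields the same action, which is the property the table is meant to guarantee.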

Control Architecture

Subsumption-Based Behavior

The robot operates using a reactive control strategy in which motor commands are selected based on real-time sensor readings. Each behavior is intentionally simple, which reduces ambiguity during testing and makes hardware-related issues easier to isolate.

- Forward: No line detected by either QTI sensor

- Left Turn: Line detected by left QTI sensor only

- Right Turn: Line detected by right QTI sensor only

- Reverse: Line detected by both QTI sensors

- 360° Turn: Triggered by whisker collision

The behaviors are arranged as a subsumption architecture to prioritize responsiveness and fault tolerance: the whisker-triggered recovery turn takes precedence over the line-following behaviors. Each behavior layer operates independently, allowing the robot to react immediately to critical events without waiting for centralized logic.
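One way to picture the layering is as a priority-ordered list of independent behaviors, where the first layer that wants control suppresses everything below it. The following is a minimal Python sketch of that idea under stated assumptions: it is a simulation rather than the code that ran on the robot, and `Sensors`, the three layer functions, and `arbitrate` are hypothetical names.

```python
from typing import Callable, NamedTuple, Optional, Sequence


class Sensors(NamedTuple):
    left_on_line: bool
    right_on_line: bool
    whisker_hit: bool


# A behavior layer returns a command string, or None to defer to lower layers.
Behavior = Callable[[Sensors], Optional[str]]


def collision_recovery(s: Sensors) -> Optional[str]:
    # Highest priority: reacts only to whisker contact.
    return "360_turn" if s.whisker_hit else None


def line_response(s: Sensors) -> Optional[str]:
    # Middle priority: reacts only when at least one QTI sensor sees the line.
    if s.left_on_line and s.right_on_line:
        return "reverse"
    if s.left_on_line:
        return "left_turn"
    if s.right_on_line:
        return "right_turn"
    return None


def cruise(s: Sensors) -> Optional[str]:
    # Lowest priority: default behavior, always has an answer.
    return "forward"


LAYERS: Sequence[Behavior] = (collision_recovery, line_response, cruise)


def arbitrate(sensors: Sensors, layers: Sequence[Behavior] = LAYERS) -> str:
    """Return the command from the highest-priority layer that responds."""
    for layer in layers:
        command = layer(sensors)
        if command is not None:
            return command
    raise RuntimeError("no layer produced a command")
```

Each layer can be tested on its own, and adding a new behavior means inserting one function into `LAYERS` at the right priority rather than rewriting shared logic.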

Software Design

Modular & Maintainable

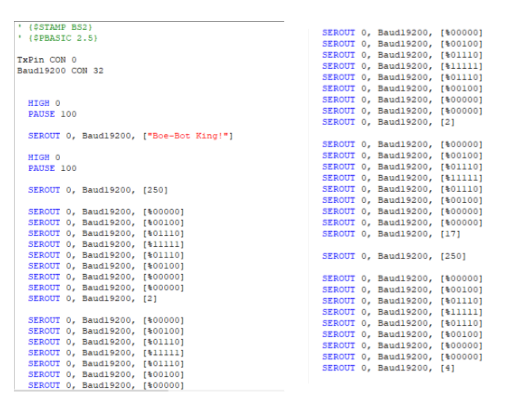

The software is organized into modular subroutines, each responsible for a single motion behavior. Sensor states are evaluated continuously, and the appropriate routine is executed without blocking the main loop.

This structure supports rapid iteration, repeatable behavior, and clear separation between sensing and actuation logic—an approach commonly used in embedded robotics systems.

Pulse counters were used to regulate turning angles and prevent over-rotation, while an onboard LCD provided real-time feedback during debugging and testing.
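The pulse-counting idea can be sketched as follows: if a calibrated number of servo pulses produces one full rotation, any other angle is a proportional share of that count. This Python sketch is illustrative only. The calibration value, `pulses_for_turn`, `run_turn`, and the `send_pulse` callback are assumptions, not values or routines taken from the robot's firmware.

```python
from typing import Callable


def pulses_for_turn(angle_deg: float, pulses_per_full_turn: int) -> int:
    """Scale a calibrated full-rotation pulse count to the requested angle."""
    if angle_deg < 0 or pulses_per_full_turn <= 0:
        raise ValueError("angle must be non-negative and calibration positive")
    return round(pulses_per_full_turn * angle_deg / 360.0)


def run_turn(angle_deg: float, pulses_per_full_turn: int,
             send_pulse: Callable[[], None]) -> int:
    """Send exactly the computed number of pulses, then stop.

    send_pulse is a hypothetical stand-in for whatever drives the servos
    for one pulse period. Returns the number of pulses sent.
    """
    count = pulses_for_turn(angle_deg, pulses_per_full_turn)
    for _ in range(count):
        send_pulse()
    return count
```

With a hypothetical calibration of 80 pulses per full rotation, `pulses_for_turn(90, 80)` gives 20 pulses. Bounding the loop by a fixed count is what prevents over-rotation: the turn ends after a known number of pulses regardless of what the sensors report mid-turn.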

Testing & Performance

Real-World Results

Through iterative testing and calibration, the robot consistently completed the obstacle course. Proper sensor alignment and ground clearance proved critical to maintaining reliable line detection.

What looked simple in theory required careful physical tuning in practice—a reminder that autonomous systems live or die by their hardware implementation, not just their code.

Engineering Challenges

Problems & Solutions

Sensor Inconsistency

Inconsistent QTI sensor readings → adjusted sensor height using washers and carefully tuned alignment

Over-Rotation

Over-rotation during turns → calibrated pulse counters and optimized timing sequences

Logic Errors

Software logic errors → resolved through on-hardware debugging and iterative testing

These challenges highlighted how small physical changes can have large impacts on autonomous behavior. A few millimeters of sensor height adjustment made the difference between reliable detection and complete failure.

Lessons Learned

Takeaways That Shaped Future Work

This project reinforced the importance of precise sensor placement, modular embedded software design, and iterative hardware-software integration. It also showed that simple control logic can outperform more complex approaches when reliability and predictability matter most.

Furthermore, this project demonstrated that intelligent behavior does not require complex algorithms. Well-structured priorities and clean behavior separation can produce robust autonomy with minimal computation.

These lessons carried directly into later projects like AfroBoat and AFROBOT, where subsumption architectures and sensor reliability became foundational design principles.

Documentation

Code & Demonstration

Code Implementation

Live Demonstration

Conclusion

Foundation for Future Work

Boe-Bot King represents my first deep exposure to autonomous behavior design. The lessons learned here—especially around sensor reliability and reactive control—directly influenced later projects such as AfroBoat and AFROBOT, where these principles were expanded into layered behavioral architectures and full system integration.

This wasn't just a class project. It was the beginning of understanding how to build systems that respond intelligently to the real world, not just theoretical scenarios.